The Unitary Events Analysis

The executed version of this tutorial is at https://elephant.readthedocs.io/en/latest/tutorials/unitary_event_analysis.html

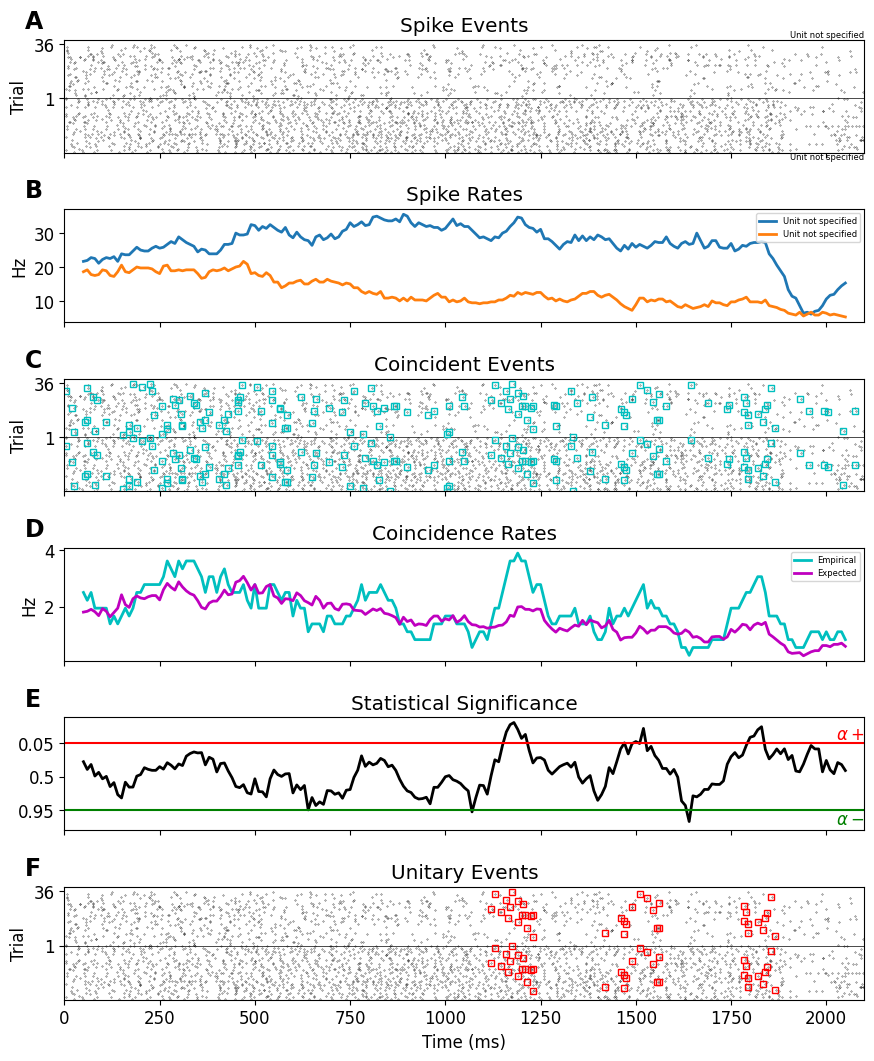

The Unitary Events (UE) analysis [1] detects coordinated spiking activity that occurs significantly more often than predicted by the firing rates of the neurons alone. It can analyze correlations not only between pairs of neurons but also among multiple neurons, by considering the various spike patterns across them. In addition, performing the analysis in a time-resolved manner extracts the dynamics of correlation between the neurons, which makes it possible to relate the occurrence of spike synchrony to behavior.
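To make "more often than predicted by the firing rates" concrete, consider the commonly used analytic variant of UE. It is assumed here for illustration, and the estimator can differ between implementations. Each neuron $i$ is taken to spike in a bin with probability $p_i$, estimated as the fraction of its $M$ bins that contain a spike. Under the null hypothesis the neurons are independent. For a binary spike pattern $v = (v_1, \dots, v_N)$, three quantities follow: the expected count $n_{\mathrm{exp}}$, the Poisson p-value $P$ of the empirical count $n_{\mathrm{emp}}$, and the joint surprise $S$ (base-10 logarithm assumed here):

$$
n_{\mathrm{exp}} = M \prod_{i=1}^{N} p_i^{\,v_i} (1 - p_i)^{\,1 - v_i},
\qquad
P = \sum_{k = n_{\mathrm{emp}}}^{\infty} \frac{n_{\mathrm{exp}}^{k}}{k!} e^{-n_{\mathrm{exp}}},
\qquad
S = \log_{10} \frac{1 - P}{P}
$$

For example, suppose two neurons each spike in 20% of 100 bins. Then the pattern $v = (1, 1)$ has $n_{\mathrm{exp}} = 100 \times 0.2 \times 0.2 = 4$ expected coincidences. Observing far more than that in a window yields a small $P$ and a large positive $S$. With several trials, the expectation can be computed per trial and summed.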

The algorithm:

1. Align trials, decide on width of analysis window.
2. Decide on allowed coincidence width.
3. Perform a sliding window analysis. In each window:
   - Detect and count coincidences.
   - Calculate expected number of coincidences.
   - Evaluate significance of detected coincidences.
   - If significant, the window contains Unitary Events.
4. Explore behavioral relevance of UE epochs.
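The steps above can be sketched in plain Python. This is a minimal, self-contained illustration, not Elephant's implementation; the tutorial itself relies on the routines in `elephant.unitary_event_analysis`, such as `ue.jointJ_window_analysis`. The sketch makes several assumptions:

- Spike times are plain lists in a common unit (ms here).
- Binning is binary, so at most one spike counts per neuron per bin.
- The bin size serves as the coincidence width.
- The expected count uses the trial-by-trial analytic estimate.
- Significance comes from a Poisson tail test.

All function names (`bin_spikes`, `poisson_tail`, `surprise`, `ue_sliding_window`) are hypothetical.

```python
import math


def bin_spikes(spike_times, t_start, t_stop, bin_size):
    """Binary binning: 1 if the neuron fired at least once in a bin, else 0."""
    n_bins = int((t_stop - t_start) // bin_size)
    binned = [0] * n_bins
    for t in spike_times:
        idx = int((t - t_start) // bin_size)
        if 0 <= idx < n_bins:
            binned[idx] = 1  # several spikes in one bin count once
    return binned


def poisson_tail(n_emp, lam):
    """P(X >= n_emp) for X ~ Poisson(lam), computed as 1 - CDF(n_emp - 1)."""
    term = math.exp(-lam)  # P(X = 0)
    cdf = 0.0
    for k in range(n_emp):
        cdf += term
        term *= lam / (k + 1)  # P(X = k + 1) from P(X = k)
    return max(0.0, 1.0 - cdf)


def surprise(p_value):
    """Joint surprise log10((1 - p) / p), with +inf / -inf at the edges."""
    if p_value <= 0.0:
        return math.inf
    if p_value >= 1.0:
        return -math.inf
    return math.log10((1.0 - p_value) / p_value)


def ue_sliding_window(trials, pattern, t_start, t_stop,
                      bin_size, win_size, win_step, alpha=0.05):
    """trials[trial][neuron] is a list of spike times (same unit as bin_size).

    bin_size plays the role of the allowed coincidence width (step 2).
    """
    if any(len(trial) != len(pattern) for trial in trials):
        raise ValueError("pattern needs one entry per neuron")
    binned = [[bin_spikes(st, t_start, t_stop, bin_size) for st in trial]
              for trial in trials]
    n_bins = int((t_stop - t_start) // bin_size)
    win_bins = int(win_size // bin_size)
    step_bins = max(1, int(win_step // bin_size))
    results = []
    for start in range(0, n_bins - win_bins + 1, step_bins):
        stop = start + win_bins
        n_emp, n_exp = 0, 0.0
        for trial in binned:
            # Step 3, detect and count: bins whose joint pattern equals `pattern`
            n_emp += sum(
                all(neuron[b] == v for neuron, v in zip(trial, pattern))
                for b in range(start, stop))
            # Step 3, expectation: independent neurons, each with its own
            # firing probability in this window of this trial
            prob = 1.0
            for neuron, v in zip(trial, pattern):
                p_i = sum(neuron[start:stop]) / win_bins
                prob *= (p_i if v else 1.0 - p_i)
            n_exp += win_bins * prob
        # Step 3, significance: Poisson tail of the empirical count
        p_value = poisson_tail(n_emp, n_exp)
        results.append({
            "t_center": t_start + (start + win_bins / 2) * bin_size,
            "n_emp": n_emp,
            "n_exp": n_exp,
            "p_value": p_value,
            "surprise": surprise(p_value),
            "significant": p_value < alpha,  # window contains Unitary Events
        })
    return results


# Usage: 10 identical trials in which both neurons fire together early on
trials = [[[1.0, 6.0, 52.0], [2.0, 7.0, 77.0]] for _ in range(10)]
for w in ue_sliding_window(trials, pattern=(1, 1), t_start=0.0,
                           t_stop=100.0, bin_size=5.0, win_size=50.0,
                           win_step=50.0):
    # the first window (20 observed vs. 4 expected) is flagged; the second is not
    print(w["t_center"], w["n_emp"], round(w["n_exp"], 3), w["significant"])
```

With overlapping windows (`win_step` smaller than `win_size`), the same loop yields the time-resolved surprise curve that the UE method uses to relate synchrony to behavior.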

References:

[1] Grün, S., Diesmann, M., Grammont, F., Riehle, A., & Aertsen, A. (1999). Detecting unitary events without discretization of time. Journal of Neuroscience Methods, 94(1), 67-79.

```python
import random
import string

import numpy as np
import matplotlib.pyplot as plt
import quantities as pq
import neo

import elephant.unitary_event_analysis as ue
from elephant.datasets import download_datasets

# Fix random seed to guarantee fixed output
random.seed(1224)
```


Next, we download a data file containing spike train data from multiple trials of two neurons.

```python
# Download data
repo_path = 'tutorials/tutorial_unitary_event_analysis/data/dataset-1.nix'
filepath = download_datasets(repo_path)
```
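If the download fails, a synthetic stand-in with a similar shape can help. It is also useful for experimenting with a known ground truth, since the injected coincidences are known in advance. The sketch below is hypothetical and does not reproduce the contents of `dataset-1.nix`. It draws independent Poisson spike trains for several trials of two neurons, with times in ms held in plain Python lists rather than `neo.SpikeTrain` objects. It can optionally inject shared spikes into every neuron to create excess coincidences. The function names and default parameters are illustrative assumptions.

```python
import random


def poisson_spike_times(rate_hz, t_stop_ms, rng):
    """Homogeneous Poisson spike train on [0, t_stop_ms); rate_hz must be > 0."""
    times, t = [], 0.0
    while True:
        # exponential inter-spike intervals with mean 1000 / rate_hz ms
        t += rng.expovariate(rate_hz / 1000.0)
        if t >= t_stop_ms:
            return times
        times.append(t)


def synthetic_trials(n_trials=20, n_neurons=2, rate_hz=20.0, t_stop_ms=2000.0,
                     sync_times_ms=(), jitter_ms=0.0, seed=1224):
    """Return trials[trial][neuron] -> sorted spike times in ms.

    Spikes at `sync_times_ms` (optionally jittered by up to +/- jitter_ms) are
    added to every neuron in every trial to create excess coincidences.
    """
    rng = random.Random(seed)  # private generator, independent of random.seed()
    trials = []
    for _ in range(n_trials):
        trial = []
        for _ in range(n_neurons):
            spikes = poisson_spike_times(rate_hz, t_stop_ms, rng)
            injected = (t + rng.uniform(-jitter_ms, jitter_ms)
                        for t in sync_times_ms)
            spikes += [t for t in injected if 0.0 <= t < t_stop_ms]
            trial.append(sorted(spikes))
        trials.append(trial)
    return trials


# Usage: 20 trials of two 20 Hz neurons with a shared spike at 500 ms
trials = synthetic_trials(sync_times_ms=(500.0,))
print(len(trials), [len(st) for st in trials[0]])
```

A private `random.Random` instance keeps the synthetic data reproducible without depending on the global seed set earlier in the notebook.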



- Downloading https://datasets.python-elephant.org/raw/master/tutorials/tutorial_unitary_event_analysis/data/dataset-1.nix to ‘/tmp/elephant/dataset-1.nix’: 1.55MB [00:08, 147kB/s]

</pre>

- Downloading https://datasets.python-elephant.org/raw/master/tutorials/tutorial_unitary_event_analysis/data/dataset-1.nix to ‘/tmp/elephant/dataset-1.nix’: 1.55MB [00:08, 147kB/s]

end{sphinxVerbatim}

Downloading https://datasets.python-elephant.org/raw/master/tutorials/tutorial_unitary_event_analysis/data/dataset-1.nix to ‘/tmp/elephant/dataset-1.nix’: 1.55MB [00:08, 147kB/s]

- Downloading https://datasets.python-elephant.org/raw/master/tutorials/tutorial_unitary_event_analysis/data/dataset-1.nix to ‘/tmp/elephant/dataset-1.nix’: 1.57MB [00:08, 144kB/s]

</pre>

- Downloading https://datasets.python-elephant.org/raw/master/tutorials/tutorial_unitary_event_analysis/data/dataset-1.nix to ‘/tmp/elephant/dataset-1.nix’: 1.57MB [00:08, 144kB/s]

end{sphinxVerbatim}

Downloading https://datasets.python-elephant.org/raw/master/tutorials/tutorial_unitary_event_analysis/data/dataset-1.nix to ‘/tmp/elephant/dataset-1.nix’: 1.57MB [00:08, 144kB/s]

- Downloading https://datasets.python-elephant.org/raw/master/tutorials/tutorial_unitary_event_analysis/data/dataset-1.nix to ‘/tmp/elephant/dataset-1.nix’: 1.59MB [00:09, 144kB/s]

</pre>

- Downloading https://datasets.python-elephant.org/raw/master/tutorials/tutorial_unitary_event_analysis/data/dataset-1.nix to ‘/tmp/elephant/dataset-1.nix’: 1.59MB [00:09, 144kB/s]

end{sphinxVerbatim}

Downloading https://datasets.python-elephant.org/raw/master/tutorials/tutorial_unitary_event_analysis/data/dataset-1.nix to ‘/tmp/elephant/dataset-1.nix’: 1.59MB [00:09, 144kB/s]

- Downloading https://datasets.python-elephant.org/raw/master/tutorials/tutorial_unitary_event_analysis/data/dataset-1.nix to ‘/tmp/elephant/dataset-1.nix’: 1.62MB [00:09, 158kB/s]

</pre>

- Downloading https://datasets.python-elephant.org/raw/master/tutorials/tutorial_unitary_event_analysis/data/dataset-1.nix to ‘/tmp/elephant/dataset-1.nix’: 1.62MB [00:09, 158kB/s]

end{sphinxVerbatim}

Downloading https://datasets.python-elephant.org/raw/master/tutorials/tutorial_unitary_event_analysis/data/dataset-1.nix to ‘/tmp/elephant/dataset-1.nix’: 1.62MB [00:09, 158kB/s]

- Downloading https://datasets.python-elephant.org/raw/master/tutorials/tutorial_unitary_event_analysis/data/dataset-1.nix to ‘/tmp/elephant/dataset-1.nix’: 1.64MB [00:09, 168kB/s]

</pre>

- Downloading https://datasets.python-elephant.org/raw/master/tutorials/tutorial_unitary_event_analysis/data/dataset-1.nix to ‘/tmp/elephant/dataset-1.nix’: 1.64MB [00:09, 168kB/s]

end{sphinxVerbatim}

Downloading https://datasets.python-elephant.org/raw/master/tutorials/tutorial_unitary_event_analysis/data/dataset-1.nix to ‘/tmp/elephant/dataset-1.nix’: 1.64MB [00:09, 168kB/s]

- Downloading https://datasets.python-elephant.org/raw/master/tutorials/tutorial_unitary_event_analysis/data/dataset-1.nix to ‘/tmp/elephant/dataset-1.nix’: 1.67MB [00:09, 194kB/s]

</pre>

- Downloading https://datasets.python-elephant.org/raw/master/tutorials/tutorial_unitary_event_analysis/data/dataset-1.nix to ‘/tmp/elephant/dataset-1.nix’: 1.67MB [00:09, 194kB/s]

end{sphinxVerbatim}

Downloading https://datasets.python-elephant.org/raw/master/tutorials/tutorial_unitary_event_analysis/data/dataset-1.nix to ‘/tmp/elephant/dataset-1.nix’: 1.67MB [00:09, 194kB/s]

- Downloading https://datasets.python-elephant.org/raw/master/tutorials/tutorial_unitary_event_analysis/data/dataset-1.nix to ‘/tmp/elephant/dataset-1.nix’: 1.68MB [00:10, 75.5kB/s]

</pre>

- Downloading https://datasets.python-elephant.org/raw/master/tutorials/tutorial_unitary_event_analysis/data/dataset-1.nix to ‘/tmp/elephant/dataset-1.nix’: 1.68MB [00:10, 75.5kB/s]

end{sphinxVerbatim}

Downloading https://datasets.python-elephant.org/raw/master/tutorials/tutorial_unitary_event_analysis/data/dataset-1.nix to ‘/tmp/elephant/dataset-1.nix’: 1.68MB [00:10, 75.5kB/s]

- Downloading https://datasets.python-elephant.org/raw/master/tutorials/tutorial_unitary_event_analysis/data/dataset-1.nix to ‘/tmp/elephant/dataset-1.nix’: 1.69MB [00:10, 175kB/s]

</pre>

- Downloading https://datasets.python-elephant.org/raw/master/tutorials/tutorial_unitary_event_analysis/data/dataset-1.nix to ‘/tmp/elephant/dataset-1.nix’: 1.69MB [00:10, 175kB/s]

end{sphinxVerbatim}

Downloading https://datasets.python-elephant.org/raw/master/tutorials/tutorial_unitary_event_analysis/data/dataset-1.nix to ‘/tmp/elephant/dataset-1.nix’: 1.69MB [00:10, 175kB/s]

Load data and extract spiketrains

[4]:

io = neo.io.NixIO(str(filepath), mode='ro')
block = io.read_block()
io.close()

# each segment contains a single trial; keep one list of spike trains per trial
spiketrains = [segment.spiketrains for segment in block.segments]
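Each element of `spiketrains` is one trial, and each trial holds one `SpikeTrain` per neuron. The UE analysis works on these trials after binning them with `bin_size` and clipping every bin to 0 or 1, so that a coincidence is simply a bin in which all neurons spiked. The NumPy-only sketch below illustrates that step on made-up spike times in ms. `bin_spike_times` is a hypothetical helper, not part of Elephant, and it assumes half-open bins [t, t + bin_size) starting at `t_start`.

```python
import numpy as np


def bin_spike_times(trials, bin_size, t_start, t_stop):
    """Hypothetical helper: turn nested spike times (trials -> neurons -> times
    in ms) into a binary array of shape (n_trials, n_neurons, n_bins)."""
    n_bins = int((t_stop - t_start) // bin_size)
    binned = np.zeros((len(trials), len(trials[0]), n_bins), dtype=int)
    for i, trial in enumerate(trials):
        for j, times in enumerate(trial):
            idx = ((np.asarray(times, dtype=float) - t_start) // bin_size).astype(int)
            # keep only spikes that fall inside a complete bin
            idx = idx[(idx >= 0) & (idx < n_bins)]
            # clip: several spikes in one bin still count as a single 1
            binned[i, j, idx] = 1
    return binned


# Illustrative spike times (ms): 2 trials x 2 neurons
trials = [
    [[3.0, 12.0, 47.0], [4.0, 30.0]],
    [[8.0, 21.0], [9.0, 22.0, 49.0]],
]
binned = bin_spike_times(trials, bin_size=5.0, t_start=0.0, t_stop=50.0)

# A coincidence is a bin in which every neuron of the trial spiked
coincidences = binned.all(axis=1)  # shape (n_trials, n_bins)
print(binned.shape)                # (2, 2, 10)
print(int(coincidences.sum()))     # 3
```

Spikes that fall beyond the last complete bin are dropped in this sketch. Elephant's own binning appears to behave similarly, which is presumably what the `UserWarning` printed by cell [5] refers to.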

Calculate Unitary Events

[5]:

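# bin_size sets the allowed coincidence width; a 100 ms window slides in
# 10 ms steps; pattern_hash=[3] selects the pattern [1, 1], i.e. both
# neurons spiking in the same bin
UE = ue.jointJ_window_analysis(
    spiketrains, bin_size=5*pq.ms, win_size=100*pq.ms, win_step=10*pq.ms,
    pattern_hash=[3])

plot_ue(spiketrains, UE, significance_level=0.05)
plt.show()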

/home/docs/checkouts/readthedocs.org/user_builds/elephant/conda/latest/lib/python3.12/site-packages/elephant/conversion.py:1130: UserWarning: Binning discarded 1 last spike(s) of the input spiketrain

warnings.warn("Binning discarded {} last spike(s) of the "
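Cell [5] hides the per-window computation. The sketch below spells out, in plain NumPy, the sliding-window steps listed at the top of this tutorial for a single pattern. `pattern_hash=[3]` is the binary code of the pattern `[1, 1]` (both neurons spike in the same bin); each 100 ms window spans 20 bins of 5 ms and moves by 2 bins; the empirical count is compared with the count expected from the trial-by-trial firing rates; and the Poisson p-value p is turned into the joint-surprise S = log10((1 - p)/p). This is an illustration under stated assumptions, not Elephant's implementation: all function names are hypothetical, the data are synthetic, and we only assume that Elephant's default analytic trial-by-trial method follows the same idea.

```python
import math

import numpy as np


def pattern_hash(pattern):
    """Encode a binary pattern (one entry per neuron) as an integer,
    first neuron = most significant bit; [1, 1] -> 3."""
    powers = 2 ** np.arange(len(pattern) - 1, -1, -1)
    return int(np.dot(powers, pattern))


def poisson_tail(n_emp, lam):
    """P(X >= n_emp) for X ~ Poisson(lam), summed term by term."""
    if n_emp <= 0:
        return 1.0
    term = math.exp(-lam)  # P(X = 0)
    cdf = term
    for k in range(1, n_emp):
        term *= lam / k  # P(X = k) from P(X = k - 1)
        cdf += term
    return max(0.0, 1.0 - cdf)


def joint_surprise(p_val):
    """S = log10((1 - p) / p); positive S means more coincidences than expected."""
    with np.errstate(divide="ignore"):
        return float(np.log10(1.0 - p_val) - np.log10(p_val))


def ue_window_sketch(binned, win_bins, step_bins, pattern):
    """Hypothetical helper. binned: 0/1 array (n_trials, n_neurons, n_bins).
    Returns one (n_emp, n_exp, S) tuple per window position."""
    pat = np.asarray(pattern).reshape(1, -1, 1)
    n_bins = binned.shape[2]
    results = []
    for start in range(0, n_bins - win_bins + 1, step_bins):
        win = binned[:, :, start:start + win_bins]
        # empirical count: bins in which every neuron matches the pattern
        n_emp = int(np.all(win == pat, axis=1).sum())
        # expected count from rates alone, trial by trial: product of each
        # neuron's per-bin probability (of spiking or staying silent, as the
        # pattern requires) times the number of bins in the window
        p_spike = win.mean(axis=2)  # (n_trials, n_neurons)
        p_pat = np.where(pat[:, :, 0] == 1, p_spike, 1.0 - p_spike).prod(axis=1)
        n_exp = float(p_pat.sum() * win_bins)
        results.append((n_emp, n_exp, joint_surprise(poisson_tail(n_emp, n_exp))))
    return results


# Illustrative data: 10 trials, 2 neurons, 60 bins of 5 ms; background spikes
# never coincide, and a joint spike is injected in bin 30 of every trial
binned = np.zeros((10, 2, 60), dtype=int)
binned[:, 0, 0::15] = 1
binned[:, 1, 7::15] = 1
binned[:, :, 30] = 1

# 100 ms windows (20 bins) moved in 10 ms steps (2 bins), as in cell [5]
results = ue_window_sketch(binned, win_bins=20, step_bins=2, pattern=[1, 1])
threshold = joint_surprise(0.05)  # S corresponding to a significance level of 0.05
significant_ms = [5 * (2 * i) for i, (_, _, s) in enumerate(results) if s > threshold]
print("pattern hash of [1, 1]:", pattern_hash([1, 1]))
print("windows with Unitary Events start at (ms):", significant_ms)
```

A significance level of 0.05 corresponds to S = log10(19), roughly 1.28. In this synthetic example only the windows that contain the injected joint spike exceed it, while windows without any coincidence get S = -inf.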